I want to transfer my Presearch node to my Titan, but there is an issue when I try to deploy the Presearch Instance.

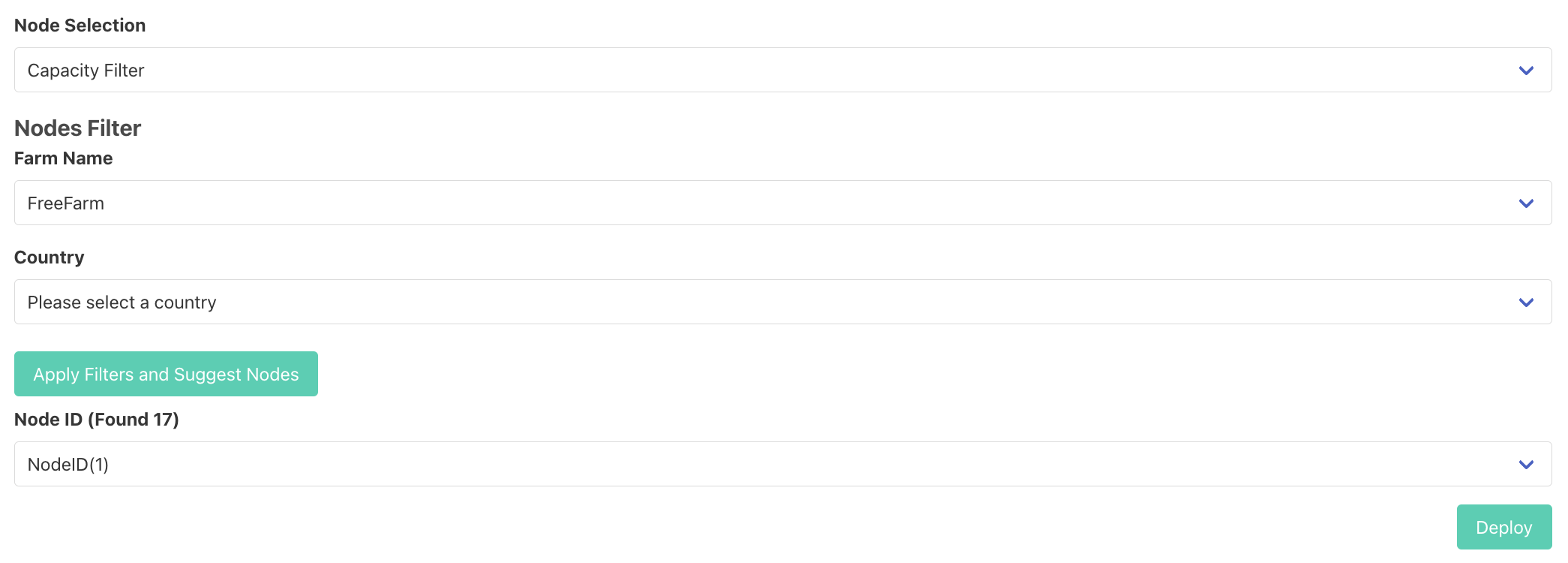

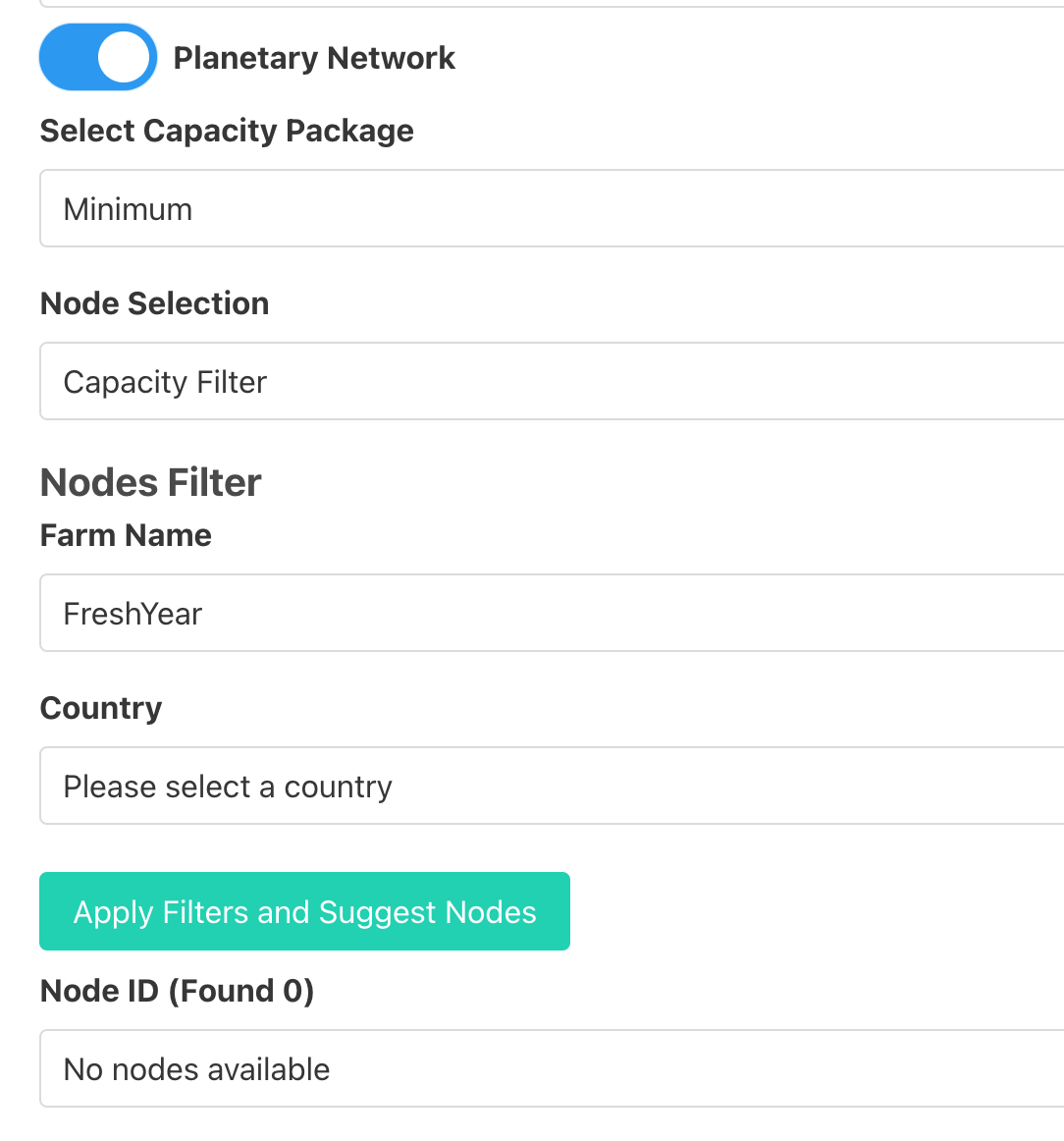

I started by navigating to https://play.grid.tf/#/presearch. Then I created a profile by specifying the Profile Name, Mnemonics, and Public SSH key. After the profile was created, I went to the Presearch Restore tab and entered the private and public restore keys, but the “Deploy” button is still disabled and no error message is displayed.

To troubleshoot, I checked the profile I created and noticed that its Twin ID is different from the Twin ID on my node. I don’t know whether that is the issue, or whether it has something to do with the SSH key I provided. I didn’t know where to get the SSH public key from, so I generated one on my MacBook.

Has anyone successfully created a Presearch node on their farm?