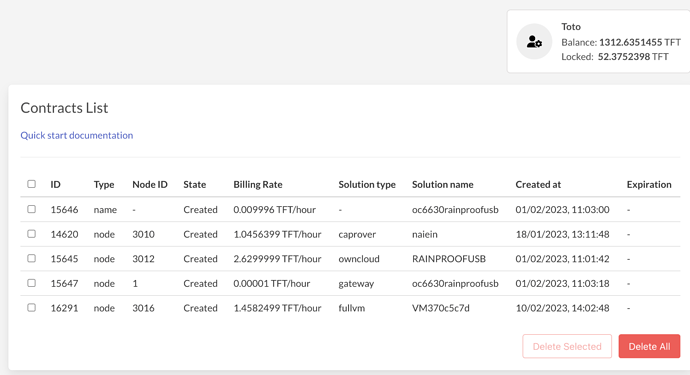

A CapRover instance was deployed and a WordPress instance was launched on it.

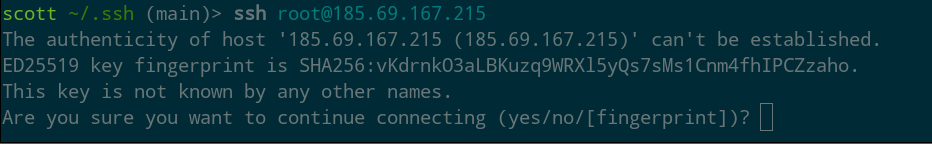

A few issues have developed, so I am trying to log in to the CapRover instance, but it refuses incoming SSH connections:

ssh: connect to host 185.69.167.215 port 22: Connection refused
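To narrow this down, a check like the one below can show whether the port is actively refused or just not answering. This is only a minimal sketch: the `probe` helper and its return strings are my own naming, not part of CapRover or the grid tooling, and it uses only the Python standard library.

```python
import errno
import socket


def probe(host: str, port: int, timeout: float = 5.0) -> str:
    """Classify a TCP connection attempt (hypothetical helper for illustration).

    Returns "open", "refused", "timeout", or "error: <code>".
    """
    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    sock.settimeout(timeout)
    try:
        sock.connect((host, port))
        return "open"
    except ConnectionRefusedError:
        # The host answered, but nothing accepts connections on this port.
        return "refused"
    except (socket.timeout, TimeoutError):
        # No answer at all within the timeout.
        return "timeout"
    except OSError as exc:
        # Anything else, e.g. no route to host.
        return f"error: {errno.errorcode.get(exc.errno, exc.errno)}"
    finally:
        sock.close()
```

Calling `probe("185.69.167.215", 22)` should return `"refused"` if it matches the SSH error above. As far as I understand, that would mean the public IP is reachable but nothing is listening on port 22 (or something is actively rejecting the connection), whereas `"timeout"` would point more towards dropped packets or a machine that is down. The same call can be pointed at other ports, such as 80 or 443.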

The JSON file looks as follows:

{
  "version": 0,
  "contractId": 14620,
  "nodeId": 3010,
  "name": "CRLnaiein",
  "created": 1674043928,
  "status": "ok",
  "message": "",
  "flist": "https://hub.grid.tf/tf-official-apps/tf-caprover-main.flist",
  "publicIP": {
    "ip": "185.69.167.215/24",
    "ip6": "",
    "gateway": "185.69.167.1"
  },
  "planetary": "",
  "interfaces": [
    {
      "network": "NWnaiein",
      "ip": "10.200.2.2"
    }
  ],
  "capacity": {
    "cpu": 1,
    "memory": 1024
  },
  "mounts": [
    {
      "name": "data0",
      "mountPoint": "/var/lib/docker",
      "size": 53687091200,
      "state": "ok",
      "message": ""
    }
  ],
  "env": {
    "SWM_NODE_MODE": "leader",
    "CAPROVER_ROOT_DOMAIN": "naiein.com",
    "CAPTAIN_IMAGE_VERSION": "v1.4.2",
    "PUBLIC_KEY": "ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABgQCwa04MlP4jib8+UdKMOzoWUfAFqC2nGrLFlImSqdQUDdjDtfgzVAYcbjtex0hncP2rotX76uCnVdzWMIoJMMm+xNkHlkbUB9GT2LAijHdKZyxthwDielV1hRvUBVSsSB4xNGGgafSIoYF+qsGL9NftlqLv04tsVgL75mtJ9i82FJ6GiZ/mh64AsvWsF8IJHhm+O3y/Su1ta1scLzELzrrn8kEGRftkvJl3uQwStAi8/N7/WWYRb0fO7uuV1pKJg5kT5gCMzhjLS2Mwruo0bkE69p4y/N3NIbH2LNsKPWueyQAwd24e7zeCPNuY6Sz+RIS/UbBIahL68NNA3alOLwRT5KjzSXaI9fiUSQSyRi33H6/1NB6VJzXEENpXqvOe+Bj5N5GgxDZ0bB1sjci+/cPLDg8OnqBALqe62AtgLx998goowuItmIACBYVFsELpECazE1buTup3Fualy+IJi8x1yIxBwr5zKC8jKD7rXkFBmUtyd7kYddgRqzX27teyen0= toto@Totos-MacBook-Air.local",
    "DEFAULT_PASSWORD": "///"
  },
  "entrypoint": "/sbin/zinit init",
  "metadata": "{\"type\":\"vm\",\"name\":\"naiein\",\"projectName\":\"CapRover\"}",
  "description": "caprover leader machine/node",
  "corex": false
}

http://captain.naiein.com shows a page saying that nothing is there yet, and https://captain.caprover.naiein.com/ times out. Both used to work fine…

The WordPress editor is still reachable, though, at http://naiein-web-wordpress.caprover.naiein.com/

But this is useless if I can’t modify the CapRover deployment, since I need to change a few PHP files and check the error logs.

Am I missing something here? How can I access the deployment?